I’m excited to announce the publication of a new article of mine in the Bulletin of the Atomic Scientists: “An Unearthly Spectacle: The untold story of the world’s biggest nuclear bomb.” As the title suggests, it’s about high-yield nuclear weapons. How high? “Very high” — which was the US jargon for weapons with yields above 50 megatons. It’s research I’ve been working on for many years now — you can see some of my early exploration into this on this post from 2012… how the time flies! — and I’m excited for it to finally see the light of day. (Let nobody ever accuse me of rushing to press too quickly…)

I had really wanted this article to be a visual feast, and I’m super pleased with how the Bulletin presented it. Special thanks to their multimedia editor, Thomas Gaulkin, for the nearly all-nighter I suspect he put in on this.

The article is really two intertwined histories. The first is about the development of the famous “Tsar Bomba,” the 100-megaton monster bomb tested (at half-power) on October 30, 1961. Everyone who knows about nukes has heard about the Tsar Bomba, but the histories of it in English have always had a sort of sketchy, judge-y quality to them. They’re about Soviet posturing and a little bit about Sakharov racing to complete the bomb, but I was really taken with the accounts one gets when reading Russian-language sources, which not only paint a much more colorful and human picture, but fill in a lot of interesting details. I wanted to make it something like a real history, with its deeper context. Of course, it’s hard to do that with the sources available in English, but a veritable bounty of internal histories and memoirs were produced in Russia at the end of the Cold War, and many of these are now (thanks to the Internet) very easily accessible (if you can read enough Russian to navigate them).

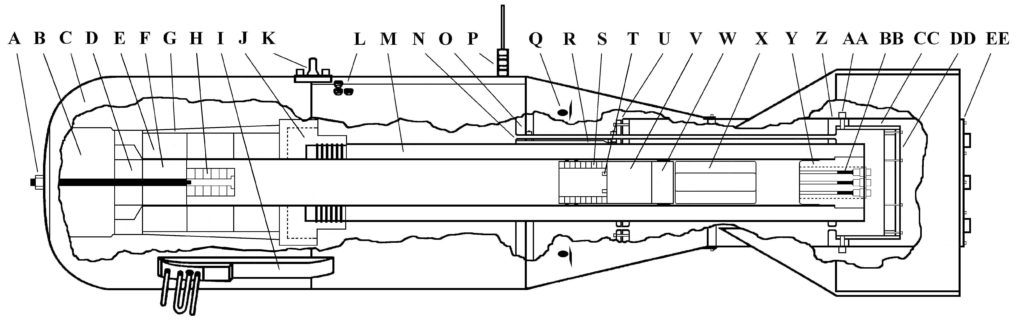

For example, the massive casing of the Tsar Bomba was developed for a totally different design in 1956 (RDS-202), which was just the stock H-bomb technology at the time with more fuel (it was an RDS-37 with enough fuel to get 20-30 megatons). This plan got scrapped, but the casing was kept in storage. Later, in 1961, the Arzamas-16 scientists decided that they could use their by-then much more advanced H-bomb tech (they had a breakthrough in 1958 called Project 49, which seems to have really improved their efficiency) to make a 100-megaton bomb in the same casing as before (RDS-602). This work involved scientists who were important to the Soviet H-bomb project but are a lot less famous than Sakharov, notably Yuri Trutnev (who recently died) and Yuri Babaev.

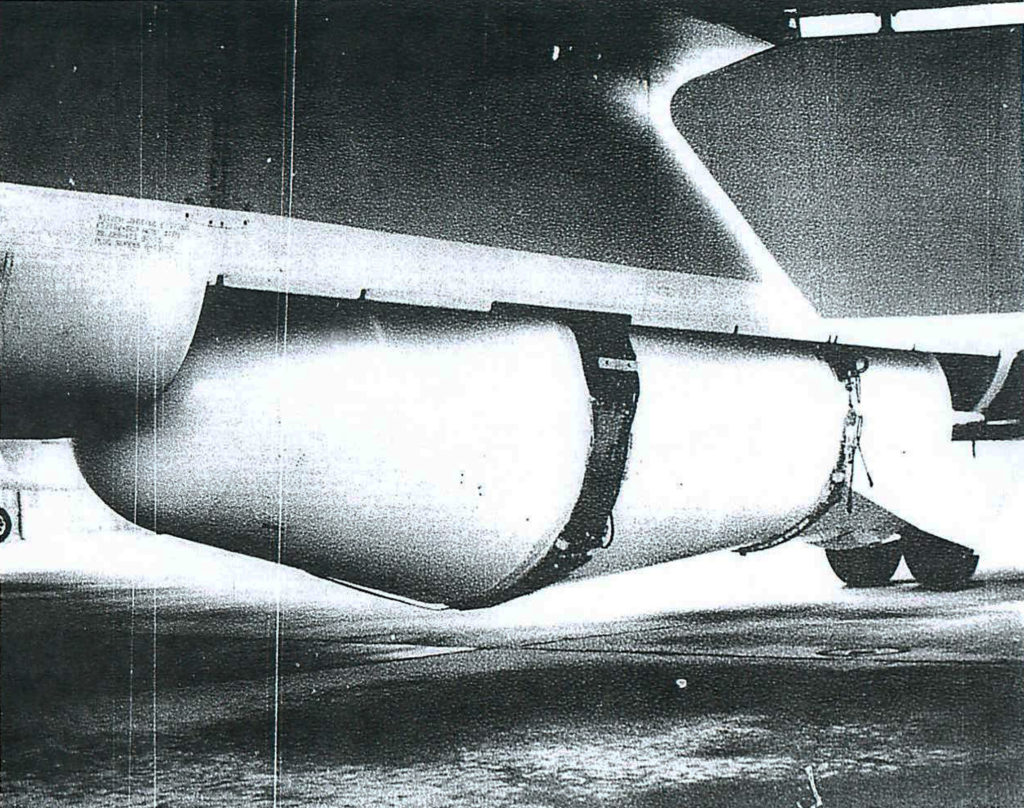

The Tsar Bomba being attached to the belly of its bomber in the still-dark hours before the test. The military did not want spotlights to be used while they did this, but the filmmakers charged with making the documentary about it explained they wouldn’t otherwise be able to see it. They were given exactly 20 minutes to shoot it. This account comes from the head of the documentary crew, Vladimir Suvorov, who wrote a memoir (Strana Limoniya) in 1989, and is just one of the many Russian-language memoirs and official histories I used to pull together this new account (it is linked to in the BAS article’s footnotes).

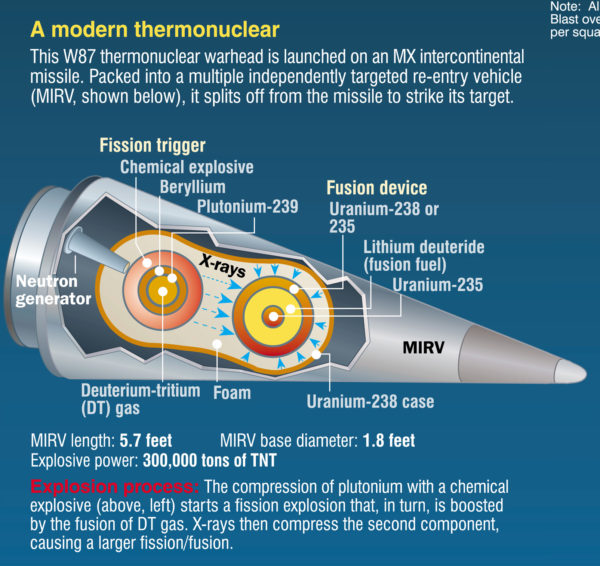

You can read the article for more, but I tried to write the history of the Tsar Bomba the way we would write the history of an American bomb development: not by just constantly pointing out the idiocy of the people involved, but by trying to describe what they did within the context of their time and thinking. I also stumbled across some very interesting details about the internal design of the Tsar Bomba (it appears to have had two primaries, one at either end of the casing; this was totally surprising to me, since the common wisdom is that multiple primaries would be almost impracticably hard to synchronize).

The article then segues into the second history: the US response to the Tsar Bomba, and this is based on documents I’ve been collecting for a decade or more. Publicly, the US denounced the weapon, declared it pointless and exclusively political in nature, and said it didn’t need such things. Privately, in secret, it explored very seriously the idea of making 50-100 Mt bombs, and contemplated even higher yields (1,000 Mt and up). I contextualize this within US nuclear thinking about “very high yield” weapons, which goes back as far as 1944, but was extremely prevalent in the late 1950s, when the US pursued a 60-Mt bomb with some enthusiasm. And, as discussed previously on the blog, Edward Teller had long been interested in, as he put it in a classified meeting in 1954, “the possibility of bigger bangs” — weapons in the range of many gigatons.

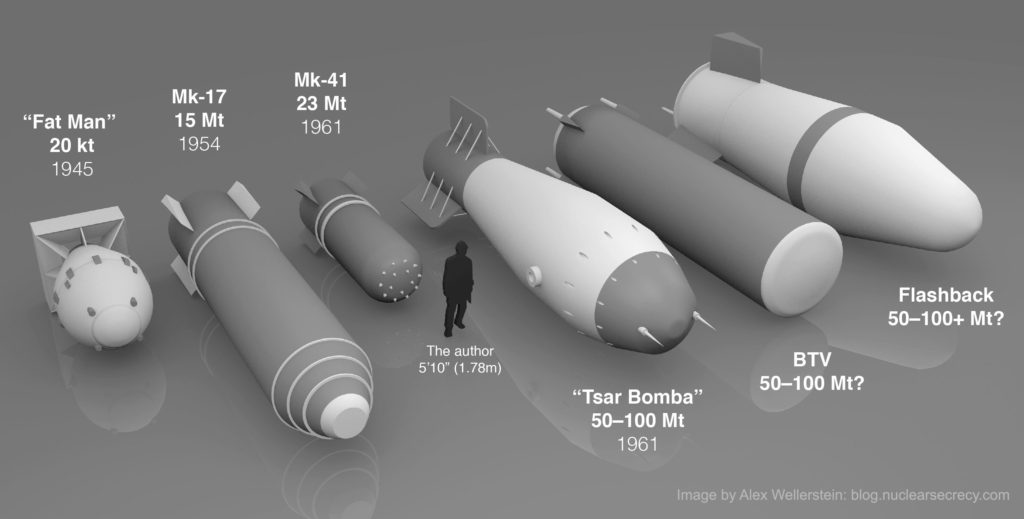

A rendering I made (in Blender) showing the relative size of various “superbomb” bomb casings (and a little silhouette of me, for scale). On the left are the biggest bombs (for their eras) that the US deployed, showing how dramatically the US was able to steadily pack megatons into less mass/volume over time. On the right are the “superbombs”: the Tsar Bomba, of course, but also the BTV and Flashback Test Vehicle, which represented different approaches to Tsar Bomba-range yields by the United States. These are bomb casings the size of small school buses. I was really pleased with how the render came out; fortunately, bomb casings are pretty easy to render as 3D models (they are essentially just deformed cylinders, and are basically symmetrical).

One of the really interesting things that I was able to integrate into the story are two remarkable bomb casings called the Big Test Vehicle (BTV) and the Flashback Test Vehicle (FBTV). These were brought to my attention some years back by Scott Lowther on his blog. They are just ridiculously large casings, and the files made it clear that they were associated with Restricted Data, but other than that it was hard to parse out exactly what they were.

I am excited to have figured it out. Basically, after all of the discussion about making 50-100 Mt bombs in the early 1960s, the US decided that it would rather sign the Limited Test Ban Treaty, even if that meant that it would be missing out on “very high yields.” (It’s extremely hard to test even a 1-megaton bomb underground: the depth of burial required to avoid venting is very great, but it’s doable if you really want to. It is totally impractical to test a 50- or 100-Mt bomb underground.) But US officials really feared that the Soviets would ditch the LTBT without much notice, like they did with the Test Moratorium in 1961. So part of their “safeguards” against the Soviets doing this was to have a program they called “Readiness to Test,” later abbreviated as just “Readiness,” which meant that they would be able to test a lot of nukes within 90 days of the Soviets breaking the LTBT. This was seen as a deterrent.

The BTV and FBTV were part of the Readiness program, developed by Sandia. They were essentially ballistic casings, fuzing arrangements, and parachutes that a nuclear device could very quickly be inserted into. So the US could prepare for atmospheric testing without actually testing: they’d be able to make the measurements they wanted and do the logistics of dropping the bombs and so on without a lot of prep time, should the decision to test go forward. Sandia developed a lot of interesting hardware for the Readiness requirement: the UTV (Universal Test Vehicle) was basically a B-53 casing that could have other devices swapped into it; the CTV (Companion Test Vehicle) was a sort of mini-bomb casing that could carry diagnostic equipment; the EMPTV (EMP Test Vehicle) carried EMP-related diagnostic equipment. (They also did a whole slew of other Readiness-related work, like preparing to test nuclear weapons in outer space. It was a big program.)

The impressively unwieldy-looking Big Test Vehicle (BTV). Just a monster of an ugly bomb casing design, optimized for perfectly fitting into a B-52’s bomb bay. Photo is from Sandia National Laboratories.

The BTV was created as the largest bomb that could fit inside of a B-52 bomb bay. It’s a pretty ugly thing, and it’s hard to imagine the ballistics were anything other than horrible. It existed only so they could test truly gigantic weapons, ones that could not fit inside the (already very large) UTV casing. In terms of timing, it was commissioned at exactly the same time that the AEC declared that they could make a bomb with pretty much the exact same dimensions at 100 Mt. So it seems pretty clear that this was part of their “hedge” for testing a 50-100 Mt bomb design.

The Flashback Test Vehicle was definitely part of such a program. It was created as part of something called Project Breaker, which is such an annoyingly common word in nuclear/technical matters that it fouls search queries pretty effectively. But I managed to piece it out using the journals of Glenn Seaborg:

1. “Breaker.” Howard read a proposed letter to the President seeking the approval of air drop ballistic tests of a ballistic shape for [a] very high yield nuclear weapon. Howard explained that the matter had been delayed by revision by him of the letter to make it clear that the ultimate development and tests of this particular weapon for B-52 or B-70 delivery could [be] justified primarily on the effects information which such a test would provide; that is, the effects of such a weapon if used against the U.S. Presumably such a test would also develop counter-measure information. Howard said that the original letter prepared by the Joint Chiefs of Staff had endeavored to justify the test on the grounds of the need to develop such a weapon for use in the U.S. stockpile, but that Howard felt, upon careful examination, that the reasons were not persuasive and that a good case for an offensive weapon was not made. The Commissioners concurred again in the desirability of a ballistics test, noting that the sheer size and shape of the ballistics dummy bomb would almost certainly result in being seen and in there being some speculation about it. Therefore, they felt it was probably necessary, for that reason as well as others, to clear the matter with the President. In addition, however, I expressed the concern that the way the letter now read it tended to imply that perhaps a decision was being taken on the question of a large weapon, when in fact this drop test program constitutes an expedient quite apart from a basic policy decision; and that it would be better if the letter were to identify the need for a policy decision, noting that a research and development and testing program that would be involved in the event that such a policy decision were taken in the affirmative.

It should be made clear that project ‘Breaker’ does not imply a decision to actually build and test a B-52–deliverable nuclear effects device.” (Later Howard re-drafted a paragraph in long hand, and read it to the Commission and received their approval.)

This is from late 1963 or early 1964 (the ambiguity is because my only access to this is through Google Books’ Snippet View, and it doesn’t let me see the date of the entry; I have tried to explain to Google that this is a government document and in the public domain, but apparently the bots that monitor those inquiries weren’t convinced). But it clearly indicates that the FBTV was part of maintaining the possibility of testing a “very high yield” nuclear weapon (which is code in this period for 50-100 Mt; for even higher, they sometimes used the term “ultra-high yield”). The FBTV was large enough that it didn’t fit inside a B-52; you had to remove the bomb bay doors. Ironically, this is exactly what the Tsar Bomba required, though the US probably didn’t know this in the 1960s.

The Flashback Test Vehicle, hanging inside a B-52 that has had its bomb bay doors removed to accommodate it, as part of the Readiness program. I don’t know whether I find Flashback or the BTV more ridiculous looking. Flashback at least looks like a bomb… but its size is just absurd, and it is almost comical how generically bomb-like it is. It makes the Tsar Bomba’s casing look somewhat reasonable.

Anyway, they did several exercises with boring names (Operation Paddlewheel is one of them) with the BTV and FBTV, basically checking if they could drop them successfully and also take a lot of photographs and diagnostics of them (they did things like take simulated flash and fireball measurements), so that if in the future they were asked to make a 50-100 Mt bomb, they could do so quickly. President Johnson seems to have put off making a decision on whether he might want a 50-100 Mt bomb; my guess is that he got bogged down with other things (Vietnam). As an aside, by the late 1960s, Sandia was suggesting that they could just fill the BTV up with conventional explosives and drop it on the Vietnamese, which gives some sense that they were beginning to suspect they were never going to use it for a “very high yield” weapon. By the late 1970s the idea was 100% dead, and Sandia donated some of the specialized equipment they made to move the BTV to NASA, which also needed to move large things.

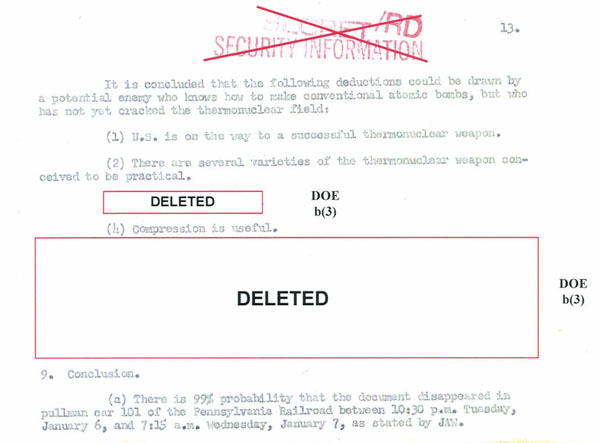

I’m proud of this article and I hope you enjoy it. I really love a good mystery and piecing together this history has really been that — it’s an area that is still very redacted despite the fact that there doesn’t seem to actually be a lot of interest in “very high yield” nukes after the 1960s (though some have speculated that the Russian “Poseidon” nuclear drone might be something like that, so who knows). So it’s required quite a lot of reading-between-the-redacted-lines, finding multiply-, differently-redacted copies of documents, and following code names through different contexts (e.g., Flashback to Breaker).

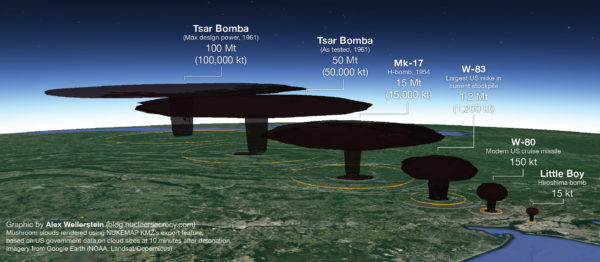

This is one image that I worked on for the article but we ultimately decided not to use, because it didn’t really fit and (in my opinion) the color scheme is kind of jarring (it would be nice to remake it with different color data). But I figured I would share it here. You can use NUKEMAP to export KMZ mushroom clouds (in the Advanced Settings) that can be opened in Google Earth Pro, so I rendered the mushroom clouds of various sizes of relevance. (I included the W80 and Mk-17 in part just to show what happens as you increase by orders of magnitude from 15 kilotons.)
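For anyone who wants to poke at an exported mushroom cloud outside of Google Earth Pro: a KMZ file is just a ZIP archive that normally wraps a main KML file plus any supporting assets, so Python's standard library can open it. What follows is only a rough sketch, not a description of what NUKEMAP actually writes into its exports. The function names and the `doc.kml` demo entry are my own hypothetical choices for illustration, and the demo archive is built in memory so the code runs without a real export.

```python
import io
import zipfile


def list_kmz_entries(kmz_source):
    """Return the names of the files stored inside a KMZ archive.

    kmz_source may be a file path or a binary file-like object.
    """
    with zipfile.ZipFile(kmz_source) as archive:
        return archive.namelist()


def read_main_kml(kmz_source):
    """Return the text of the first .kml entry found in the archive."""
    with zipfile.ZipFile(kmz_source) as archive:
        for name in archive.namelist():
            if name.lower().endswith(".kml"):
                return archive.read(name).decode("utf-8")
    raise ValueError("no .kml entry found in archive")


def make_demo_kmz():
    """Build a tiny in-memory KMZ (hypothetical contents) for demonstration."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr(
            "doc.kml",
            "<kml><Document><name>demo cloud</name></Document></kml>",
        )
    buffer.seek(0)
    return buffer


if __name__ == "__main__":
    print(list_kmz_entries(make_demo_kmz()))
    print(read_main_kml(make_demo_kmz()))
```

To try it on a real export, you would pass the path of the downloaded file to `list_kmz_entries` instead of the demo buffer.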

It’s not the end of this story or line of research (there’s more to say about both the Tsar Bomba and “very high yield” nuclear weapons pursuits by the US), but with the 60th anniversary of the October 1961 test looming, it felt like it was the time to try and push out some of it. I’ve also tried to make almost every aspect of the documentation available to readers, with lots of documents linked to in the article footnotes. And the Russian side of things could not have been accomplished without the amazing book library put up by Rosatom a few years back. I’d also like to thank Scott Lowther, Carey Sublette, and a former undergraduate research assistant, Ksenia Holmes (who helped me trawl through many of the Russian sources, but any translation errors are mine alone), for their various contributions and assistance.

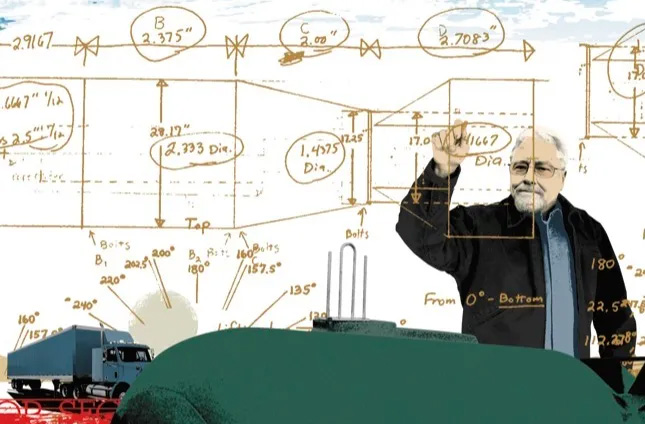

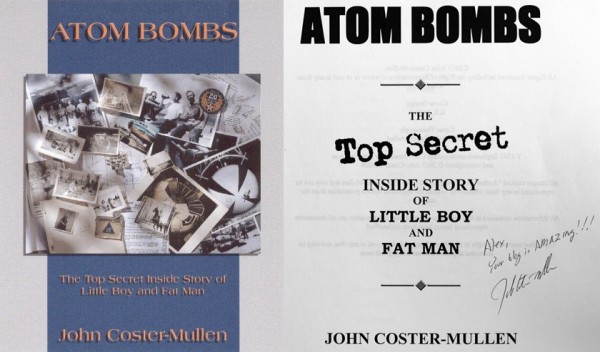

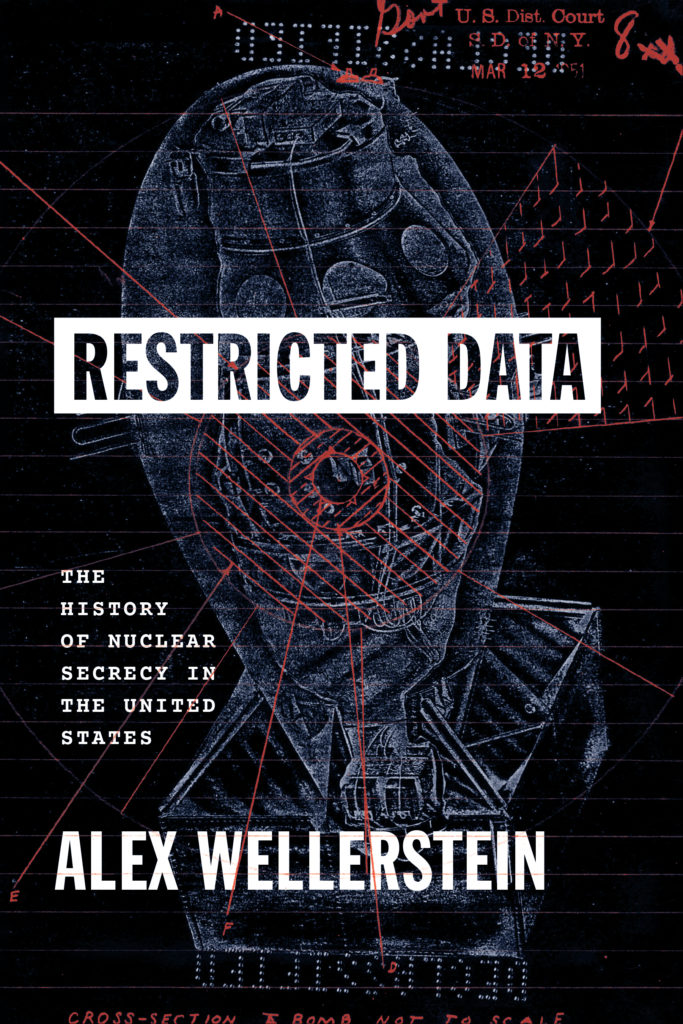

The 10th anniversary of this blog is a little over a week away, if you can believe it (I can barely believe it). I will be posting something about it, so watch this space. Don’t worry: I’m not announcing a blog retirement! Also, if you want a signed copy of my book, the arrangement I’ve made with my local bookstore, Little City Books, is still (as of Fall 2021) active.