Post archives

Filtering for posts tagged with ‘1960s’

2021

October 2021

Redactions

The untold story of the world's largest nuclear bomb, the Tsar Bomba, and the secret US efforts to match it.

2018

May 2018

News and Notes | Visions

A new exhibit on the first nuclear-armed submarines is opening this week at the Intrepid Museum in New York.

2016

July 2016

Meditations

How the US came to have three major strategic nuclear platforms, and why it started calling them a "triad."

2015

June 2015

Visions

The Soviet space dogs were more than just adorable mutts — but they were those, too.

2014

September 2014

Visions

The impressive ugliness that almost became the emblem of the International Atomic Energy Agency.

July 2014

Redactions

Would the bomb have won the war? A JASON report on the question from 1967 explores the limits of tactical nuclear weapons.

May 2014

Visions

What did Oppenheimer mean when he famously said, "Now I am become death, destroyer of worlds"? A foray into the Bhagavad-Gita.

April 2014

Redactions

A review of Eric Schlosser's new book, Command and Control, and thoughts on why the history of nuclear weapons accidents is hard to write.

2013

December 2013

Meditations

By looking at trends in yield-to-weight ratios, we can peel back the veil just a tiny bit on nuclear weapons design.

Visions

Should films of nuclear detonations be put in art galleries?

October 2013

Redactions

The 37th President had a strange relationship with nuclear weapons — he didn't think they mattered very much.

Redactions

Who told Werner Heisenberg that an atomic bomb might be dropped on Dresden? Plus: another curious wartime leak.

September 2013

Redactions

New details about a nuclear weapons accident make it clear how close we came to an accidental, full-yield, megaton-range detonation.

August 2013

Meditations | Visions

I thought I knew a lot about nuclear fallout, but digging into the details taught me some subtle but important points about how it worked.

January 2013

Visions

An investigation into the graphical history of the UN nuclear watchdog.

Meditations

Herman Kahn on the relationship between price and control, and what it might mean for the biological revolution underway.

2012

December 2012

Visions

Decorating a tree, Hanford-style.

Visions

How does one recruit nuclear weapons designers? In the 1950s, you could just take out ads in popular magazines.

November 2012

Meditations

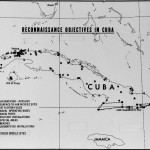

Are there new things to be said about the Cuban Missile Crisis after 50 years of talking about it?

October 2012

Meditations

Contrary to popular perception, Kennedy's "quarantine" of Cuba during the Missile Crisis wasn't a complete success — there were already lots of nukes there.

Meditations

A new memoir about living next to Rocky Flats and what its perspective might be able to do for nuclear historians.

July 2012

Visions

A step-by-step guide to firing the Davy Crockett, the "atomic bazooka," and the smallest nuke in the Cold War US arsenal.

June 2012

Meditations

Two new articles on centrifuge history shed important light on US-UK nuclear interactions in the Cold War, and the problem of proliferation.

May 2012

Redactions

Gas centrifuges posed a tough problem for the US in the 1960s, perched in between fears of proliferation and the desires of industry.

Meditations

Gas centrifuges could have been made to work during the Manhattan Project, but the project never figured out how.

Redactions

Why Norris Bradbury didn't want to build the bomb... again. And what they ended up eventually doing about it.

April 2012

Visions

A selection of Time magazine covers featuring nuclear weapons topics over the decades.

January 2012

Visions

Is "leak" a good metaphor for unofficial information flow? Probably not.

Redactions

A close read of what the famous 1967 report on the ease of proliferation actually says, and doesn't say.

2011

December 2011

Redactions

Can something be secret even if it is wrong?