Post archives

Filtering for posts tagged with ‘Language’

Showing 1-3 of 3 posts that match the query

2012

August 2012

Visions

When "atomic" beat "nuclear," and when we stopped defining our age by our reactors.

April 2012

Meditations

People are often calling for a "new Manhattan Project," but does that make any sense?

January 2012

Visions

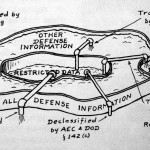

Is "leak" a good metaphor for unofficial information flow? Probably not.
