Post archives

Filtering for posts tagged with ‘Leslie Groves’

2020

June 2020

Meditations

As we approach the 75th anniversary of the atomic bombings, we're going to see a lot of journalistic takes on them — many of them totally wrong.

2017

May 2017

Redactions

Was the first history of the atomic bomb biased towards physics to avoid public associations with chemical weapons? My take on a recent article.

2016

September 2016

Redactions

If he had lived to make the decision, would Roosevelt have dropped the atomic bomb?

March 2016

Meditations

If you could talk about secrecy with the former head of the CIA and the NSA, what would you say?

2015

December 2015

Meditations

What caused the atomic spies of Los Alamos to do what they did? Somewhere in the zone between ideology and ego, monsters live.

November 2015

Redactions

At what point did the Manhattan Project scientists and administrators realize they weren't in a race with Nazi Germany after all?

Visions

The popular version of Oppenheimer at Los Alamos is one of infinite competence, confidence, and charm. The reality is far more complex.

October 2015

Redactions

One of the most unusual, curious, and controversial members of the Manhattan Project was its in-house newspaperman from the New York Times.

Redactions

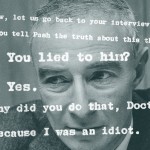

How far would Manhattan Project security go to deal with a problematic genius?

March 2015

Redactions

In 1945, some scientists thought we should "demonstrate" the bomb to Japan before dropping it on a city. Others disagreed. Who was right?

January 2015

Redactions

What do the newly released Oppenheimer transcripts tell us about the security hearing, and its original redaction?

2014

September 2014

Redactions

When the head of the Manhattan Project had questions about the history of the atomic bomb, he had a special, secret place to look for answers.

February 2014

Redactions

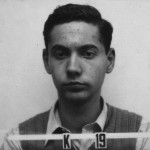

In a short story published in 1949, Leo Szilard contemplated how well he and President Truman would fare at a war crimes tribunal. His conclusion: not well.

2013

November 2013

Meditations

A portrait of a year in flux, when the possibilities of a new order moved from the limitless to the concrete.

Redactions

How many people did it take to make the atomic bomb? Probably many more than you realize.

October 2013

Redactions

Who told Werner Heisenberg that an atomic bomb might be dropped on Dresden? Plus: another curious wartime leak.

Redactions

If the first atomic bomb had been ready in 1944, would it have been used against the Nazis? Surprisingly, Roosevelt may have been interested in doing it.

September 2013

Redactions

How did an article about the work at the Los Alamos laboratory come to be published in March 1944?

Redactions

Are there any indications that the Germans penetrated the secrecy surrounding the American atomic bomb project during World War II? Not many.

Redactions

During World War II, the United States didn't just fear a German atomic bomb, but also a German dirty bomb. But secrecy made acting on such fears difficult.

August 2013

Meditations

Why was a second bomb used against Japan, so soon after Hiroshima? A review of several theories.

May 2013

Meditations

Of the $2 billion spent on the Manhattan Project, where did it go, and what does it tell us about how we should talk about the history of the bomb?

April 2013

Meditations | Redactions

Blacking something out is only a step away from highlighting its importance, and the void makes us curious.

March 2013

Meditations

How many ways are there to talk about secrecy? Quite a few.

Meditations | Redactions

Did atomic secrets kill Lt. Col. Paul P. Stoutenburgh?

Meditations | Redactions

Where do historians stand, in the 2010s, on the decision to use the atomic bomb? A report from a recent workshop.

2012

October 2012

Redactions

Did Truman know about the radiation effects of the atomic bombs before they were used? Does it matter?

August 2012

Visions

The security badges produced for the Los Alamos project present a dizzying display of the many people who worked on the atomic bomb.

Redactions

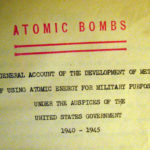

What the first technical history of the atomic bomb did — and didn't — say about the work of designing the bomb.

Visions

American newspaper front pages from the first five days of the atomic bomb's public debut.